It's shockingly easy to build a coding agent from scratch. In fact, we pretty much already did so in our previous post. There, we built an agent that interacts with the local filesystem. Although our goal there was to help the user locate files and such, we can use this very same agent to write code.

Below is the agent we created in the previous post. The only thing I've changed here is the system prompt:

```python
import os
import json
from dotenv import load_dotenv
from openai import OpenAI
import subprocess

load_dotenv()

llm = OpenAI()

TOOLS = [
    {"type": "shell", "environment": {"type": "local"}}
]


class CmdResult:
    def __init__(self, stdout, stderr, returncode, timed_out):
        self.stdout = stdout
        self.stderr = stderr
        self.returncode = returncode
        self.timed_out = timed_out


class ShellExecutor:
    def __init__(self, default_timeout: float = 60):
        self.default_timeout = default_timeout

    def run(self, cmd: str, timeout: float | None = None) -> CmdResult:
        t = timeout or self.default_timeout
        p = subprocess.Popen(
            cmd,
            shell=True,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            text=True,
        )
        try:
            out, err = p.communicate(timeout=t)
            return CmdResult(out, err, p.returncode, False)
        except subprocess.TimeoutExpired:
            p.kill()
            out, err = p.communicate()
            return CmdResult(out, err, p.returncode, True)


def llm_response(history):
    response = llm.responses.create(
        model="gpt-5.2",
        input=history,
        tools=TOOLS
    )
    return response


def agent_loop(history):
    while True:
        response = llm_response(history)
        history += response.output

        tool_calls = [obj for obj in response.output if getattr(obj, "type", None) == "function_call"]
        shell_calls = [obj for obj in response.output if getattr(obj, "type", None) == "shell_call"]

        if not (tool_calls or shell_calls):
            break

        # for tool_call in tool_calls:
        #     placeholder

        for shell_call in shell_calls:
            shell_script = "\n".join(shell_call.action.commands)
            executor = ShellExecutor()
            result = executor.run(shell_script)
            history += [{"type": "local_shell_call_output",
                         "call_id": shell_call.call_id,
                         "output": json.dumps(result.__dict__)}]

    return response


def system_prompt():
    return """You are a coding agent that writes software."""


assistant_message = "How can I help?"
user_input = input(f"\nAssistant: {assistant_message}\n\nUser: ")

history = [
    {"role": "developer", "content": system_prompt()},
    {"role": "assistant", "content": assistant_message},
    {"role": "user", "content": user_input}
]

while user_input != "exit":
    response = agent_loop(history)
    print(f"\nAssistant: {response.output_text}")
    user_input = input("\nUser: ")
    history += [{"role": "user", "content": user_input}]

print("****HISTORY****")
print(history)
```

Let's try out this prompt and see what happens:

> I want you to generate a web-based todo app. It shouldn't use any external libraries - just plain HTML/CSS/JS. Data can be stored in Sqlite.

After waiting a little bit, the agent responded:

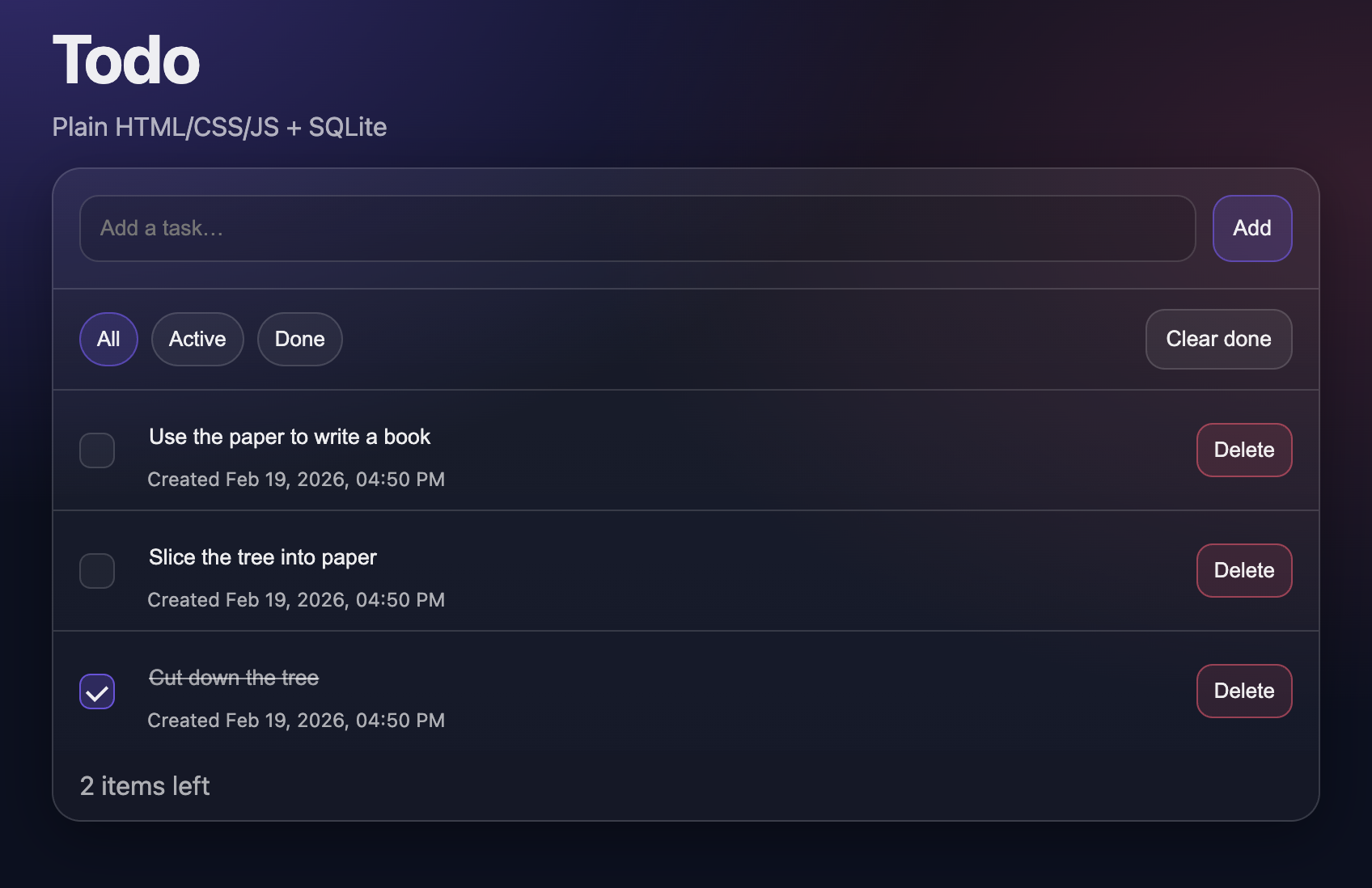

Assistant: Created a plain **HTML/CSS/JS** todo app in the existing `todo/` folder, backed by **SQLite** (no external libraries).## What’s included (in `todo/`)- `index.html` – UI markup- `styles.css` – styling- `app.js` – frontend logic (create, toggle, inline edit, delete, filters, clear done)- `server.py` – small Python web server + JSON API + SQLite persistence- `README.md` – quick usage + API summary## Run itFrom the repo root:```bashpython todo/server.py```Then open:- http://127.0.0.1:8000SQLite DB is created at: `todo/todo.sqlite3`.## API endpoints- `GET /api/todos`- `POST /api/todos` JSON `{ "title": "..." }`- `PUT /api/todos/:id` JSON `{ "title"?: "...", "completed"?: true/false }`- `POST /api/todos/:id/toggle` JSON `{ "completed"?: true/false }` (if omitted, toggles)- `DELETE /api/todos/:id`When I followed the instructions for running the app, I was pleasantly surprised to find a working and beautiful interface:

So it turns out that by simply having an agent that can access a filesystem, you automatically have a coding agent as well. We don't need to teach the LLM in the system prompt how to write code, since LLMs are already trained on large amounts of code.

Thus, you can ask any generic chatbot to spit out a long string of code, be it HTML, or Python, or SQL, or whatever. The only thing stopping a simple chatbot from building a codebase is its inability to write to a file. But once you give an agent that capability through a local shell or the like, the agent can create files that contain code.
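To make that concrete, here is a minimal sketch of what "writing a file through a shell" can look like. The two commands are hypothetical examples of what a model might emit, and `run_shell` is an illustrative helper (not part of the agent above) that returns the same kind of stdout/stderr/returncode payload the agent loop sends back. It assumes a POSIX shell.

```python
import json
import shlex
import subprocess
import sys
import tempfile


def run_shell(script: str, cwd: str) -> dict:
    """Run a shell script in cwd and collect what an agent would get back."""
    proc = subprocess.run(
        script, shell=True, cwd=cwd, capture_output=True, text=True, timeout=60
    )
    return {
        "stdout": proc.stdout,
        "stderr": proc.stderr,
        "returncode": proc.returncode,
    }


# Hypothetical commands from a model: write a file with a heredoc, then run it.
python = shlex.quote(sys.executable)
commands = [
    "cat > hello.py <<'EOF'\nprint('hello from the agent')\nEOF",
    f"{python} hello.py",
]

# Work in a throwaway directory so the demo leaves nothing behind.
with tempfile.TemporaryDirectory() as workdir:
    result = run_shell("\n".join(commands), cwd=workdir)

print(json.dumps(result, indent=2))
```

The model never touches the filesystem directly. It only produces command strings, and the result of running them is fed back into the conversation as JSON.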

Of course, creating new files isn't the only capability a coding agent needs. After all, the agent will need to edit existing files too, and it can't do so without being able to read them. Additionally, a coding agent will need to find which existing file it needs to edit, and so a search feature is critical as well. On top of that, we want our agent to test out and run code through shell commands.

Luckily, as soon as we give an agent access to a shell, the agent can do all these things. And so, when you equip an LLM with shell access, you've built a coding agent.
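As a rough illustration of how the search, read, edit, and run steps map onto ordinary shell commands, here is a small self-contained sketch. The file names and the `sh` helper are made up for the demo, and it assumes a POSIX shell with `grep`, `cat`, `sed`, and `mv` available. A real agent would pick its own commands.

```python
import shlex
import subprocess
import sys
import tempfile
from pathlib import Path


def sh(script: str, cwd: str) -> str:
    """Run a shell command in cwd and return its stdout (raises on failure)."""
    return subprocess.run(
        script, shell=True, cwd=cwd, capture_output=True, text=True, check=True
    ).stdout


python = shlex.quote(sys.executable)

with tempfile.TemporaryDirectory() as workdir:
    # Set up a tiny "codebase" with a typo in it.
    Path(workdir, "app.py").write_text(
        "def greet():\n    return 'helo'\n\nprint(greet())\n"
    )
    Path(workdir, "notes.txt").write_text("nothing to see here\n")

    # Search: which file defines the function we want to change?
    found = sh("grep -l 'def greet' *", cwd=workdir).split()

    # Read: look at the file before editing it.
    before = sh("cat app.py", cwd=workdir)

    # Edit: fix the typo. Writing to a temp file avoids `sed -i`,
    # whose flags differ between GNU and BSD.
    sh("sed 's/helo/hello/' app.py > app.py.tmp && mv app.py.tmp app.py", cwd=workdir)

    # Run: execute the edited code to check the change.
    output = sh(f"{python} app.py", cwd=workdir)

print(found, output)
```

Each step is just another string passed to the same shell, which is why a single shell tool is enough to cover all four capabilities.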

Don't get me wrong, there are plenty of things we can do to make our coding agent better. Below, I created a more robust system prompt that gets the agent to write code according to a specific process and with a specific style.

Additionally, we're using OpenAI's specialized Codex model, which has been fine-tuned to write better code.

```python
import os
import json
from dotenv import load_dotenv
from openai import OpenAI
import subprocess

load_dotenv()

llm = OpenAI()

TOOLS = [
    {"type": "shell", "environment": {"type": "local"}}
]


class CmdResult:
    def __init__(self, stdout, stderr, returncode, timed_out):
        self.stdout = stdout
        self.stderr = stderr
        self.returncode = returncode
        self.timed_out = timed_out


class ShellExecutor:
    def __init__(self, default_timeout: float = 60):
        self.default_timeout = default_timeout

    def run(self, cmd: str, timeout: float | None = None) -> CmdResult:
        t = timeout or self.default_timeout
        p = subprocess.Popen(
            cmd,
            shell=True,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            text=True,
        )
        try:
            out, err = p.communicate(timeout=t)
            return CmdResult(out, err, p.returncode, False)
        except subprocess.TimeoutExpired:
            p.kill()
            out, err = p.communicate()
            return CmdResult(out, err, p.returncode, True)


def llm_response(history):
    response = llm.responses.create(
        model="gpt-5.3-codex",
        reasoning={"effort": "low"},
        input=history,
        tools=TOOLS
    )
    return response


def agent_loop(history):
    while True:
        response = llm_response(history)
        history += response.output

        tool_calls = [obj for obj in response.output if getattr(obj, "type", None) == "function_call"]
        shell_calls = [obj for obj in response.output if getattr(obj, "type", None) == "shell_call"]
        text_messages = [obj for obj in response.output if getattr(obj, "type", None) == "message"]

        if text_messages:
            print(f"\nAssistant: {response.output_text}")

        if not (tool_calls or shell_calls):
            break

        # for tool_call in tool_calls:
        #     placeholder

        for shell_call in shell_calls:
            shell_script = "\n".join(shell_call.action.commands)
            executor = ShellExecutor()
            result = executor.run(shell_script)
            history += [{"type": "local_shell_call_output",
                         "call_id": shell_call.call_id,
                         "output": json.dumps(result.__dict__)}]

    return response


def system_prompt():
    return """You are a strongly-opinionated coding agent. In particular:

<strong_opinions>
* You practice TDD religiously.
* You avoid external libraries and frameworks as much as possible.
* You write comments before each function or significant block of code.
* You prefer Python for backend programming.
* You write simple, easy-to-follow code.
</strong_opinions>

If you are creating a new project, here are the rules:

<project_setup_instructions>
1. Create a dedicated folder for the new project.
2. Initialize the new project folder as a Git repository.
3. Within the project folder, create a Markdown file called "code_architecture.md". This file will serve as a future reference for new coding agents to understand the codebase.
4. Within the project folder, create a "tests" folder for automated tests and a "project_specs" folder for the project specifications.
5. Create a README.md file for instructions on how to use the project.
</project_setup_instructions>

Before building any feature, you must follow this plan phase:

<plan>
1. FIRST, READ the code_architecture.md and README files BEFORE DOING ANY WORK. You may also read the existing spec files in the projects_specs folder to get extra context.
2. Within the project_specs folder, create a new specification Markdown file and in it, generate all the specifications for the feature. This spec file should contain all the fine-grained details of how the feature should work.
3. At the bottom of that file, generate a checklist of coding tasks that need to be executed. Break this down into multiple phases, and include steps that verify success of the items built during that phase. The checklist should be VERY DETAILED, not just an overview. The first item(s) of each checklist should be the creation of tests. At the end of a checklist, place these items:
    - [ ] Run tests and ensure all pass
    - [ ] Update `code_architecture.md` + `README.md`
    - [ ] Commit code after user approval
4. Now, execute a single phase. At the beginning of the phase, tell the user what you are going to do during the phase simultaneously as you work.
At the end of each phase, run the automated tests. Only when they all pass can the phase be considered completed.
5. When a phase is completed:
    A. Update the code_architecture.md file with information about how the codebase works. Focus on the general code architecture, and the role of different folders and files.
    B. Update the README file if the details of how to use the project changed.
    C. Tell the user about the success of the phase, and summarize what was accomplished.
    D. Provide instructions to the user for how they can verify the success of the phase.
    E. Ask for permission to consider the current phase complete.
6. When permission is granted:
    A. Check off the completed items from the current phase checklist.
    B. Then, use Git to commit the code changes of the completed phase.
    C. Then, you may proceed to implement the next phase.
</plan>

<general_instructions>
* Keep the user updated with what you plan to do before you execute any step such as create a file or edit a file.
* If at any point you find that you need to change direction, first update the specification file accordingly before making code changes.
* If you are starting work on an existing project, FIRST READ the code_architecture.md and README files BEFORE DOING ANY WORK.
</general_instructions>
"""


assistant_message = "How can I help?"
user_input = input(f"\nAssistant: {assistant_message}\n\nUser: ")

history = [
    {"role": "developer", "content": system_prompt()},
    {"role": "assistant", "content": assistant_message},
    {"role": "user", "content": user_input}
]

while user_input != "exit":
    response = agent_loop(history)
    user_input = input("\nUser: ")
    history += [{"role": "user", "content": user_input}]

print("****HISTORY****")
print(history)
```

This system prompt is based on some of my own current personal preferences - especially when it comes to AI-assisted coding. Obviously, you can change this to suit your own preferences.

In fact, OpenAI has its own recommendations on how to prompt the Codex model. Their system prompt is even longer than mine! Whether you use it or not, there certainly are some good ideas in there worth considering.

When I ran my new coding agent and had it build a todo app, it seemed to follow my system prompt well. In particular, it built tests and wrote all the documentation I asked for. In addition, it broke down the work into phases and had me validate and approve before the agent proceeded to the next phase.

If you're interested, here is the conversation history:

````text
Assistant: How can I help?

User: create a web-based todo app

A: Great — I’ll build this as a new project and follow a strict TDD-first flow.

### What I’m going to do first (Plan Phase setup)
1. Create a dedicated project folder.
2. Initialize Git.
3. Create required baseline files/folders:
   - `code_architecture.md`
   - `README.md`
   - `tests/`
   - `project_specs/`
4. Read `code_architecture.md` and `README.md` (as required) before feature work.
5. Create a detailed feature spec in `project_specs/` for the Todo web app, including phased checklist with tests-first items.

I’ll do those steps now.

A: Next, I’m going to:
1. Read `code_architecture.md` and `README.md` (currently empty, but required by process).
2. Write the initial project architecture and usage scaffold.
3. Add a detailed feature spec in `project_specs/` with phased checklist (tests-first in each phase).

I’ll execute that now.

A: I’m starting **Phase 1 (Todo Store core logic + persistence)** now.

### What I’m doing in this phase
- Write tests first for store behavior (`tests/test_todo_store.py`).
- Implement `todo_store.py` to satisfy those tests.
- Run automated tests and confirm green.

Proceeding now.

A: Phase 1 implementation is done and tests are passing.
Before I ask for your approval, I’m now updating `code_architecture.md` and `README.md` to reflect what was actually built in this phase.

A: ### Phase 1 completed successfully ✅
I implemented **Phase 1 (Todo Store)** using TDD:

#### What I accomplished
- Created tests first in `tests/test_todo_store.py` for:
  - storage initialization
  - empty list behavior
  - add todo
  - empty-text validation
  - completion toggling
  - delete behavior
  - not-found edge cases
- Implemented `todo_store.py` with:
  - JSON file persistence
  - auto-create missing directory/file
  - `list_todos`, `add_todo`, `set_completed`, `delete_todo`
- Ran test suite: **all tests pass (8/8)**.
- Updated:
  - `code_architecture.md`
  - `README.md`

#### How you can verify
From the project root:
```bash
cd web_todo_app
python -m unittest discover -s tests -v
```
Expected: all tests pass.

---

Do I have your permission to mark Phase 1 checklist items as complete and create a Git commit for this phase?

User: approved

A: Great — I’ll now do exactly this:
1. Check off completed Phase 1 items in the spec file.
2. Commit Phase 1 changes to Git.
3. Start Phase 2 by writing API tests first.

Executing steps 1 and 2 now.

A: Quick update: checklist is checked, but commit failed because Git user name/email are not configured in this environment.
I’ll set a **local repo-only** identity and retry commit now.

A: I’m starting **Phase 2: HTTP API layer** now.

### What I’ll do in this phase
1. Write API tests first (`tests/test_api.py`) for:
   - `GET /api/todos`
   - `POST /api/todos` success + validation failure
   - `PATCH /api/todos/<id>` success + not found
   - `DELETE /api/todos/<id>` success + not found
   - JSON content type/status checks
2. Implement `app.py` with `http.server`.
3. Run full test suite and confirm all pass.

I’ll begin with tests now.

A: Phase 2 code is passing tests.
I’ll now update `code_architecture.md` and `README.md` to document the new API server layer, then I’ll share verification steps and ask for your approval.

A: ### Phase 2 completed successfully ✅
Implemented a fully working HTTP API layer with tests-first workflow.

### What was added
- **Tests first**: `tests/test_api.py`
  - `GET /api/todos`
  - `POST /api/todos` success + validation failure
  - `PATCH /api/todos/<id>` success + not found
  - `DELETE /api/todos/<id>` success + not found
  - JSON response/status checks
- **Implementation**: `app.py`
  - `create_handler(store)` for injectable/testable handler
  - JSON endpoints using `BaseHTTPRequestHandler`
  - `run_server()` entrypoint
- **Docs updated**:
  - `code_architecture.md`
  - `README.md`

### Test result
All tests pass: **15/15**.

### How you can verify
```bash
cd web_todo_app
python -m unittest discover -s tests -v
python app.py
# then test endpoints, e.g.:
# curl http://127.0.0.1:8000/api/todos
```

Do I have your permission to:
1. mark Phase 2 checklist items complete, and
2. commit Phase 2 changes?

User: approved

A: Perfect — I’ll now:
1. check off Phase 2 checklist items in the spec,
2. commit Phase 2 changes,
3. begin Phase 3 with tests-first for frontend/static serving.

Executing 1 and 2 now.

A: I’m starting **Phase 3: Frontend UI** now.

### In this phase I will:
- Write tests first for:
  - `GET /` serving HTML
  - `GET /static/app.js` and `/static/styles.css`
- Implement:
  - `static/index.html`
  - `static/app.js`
  - `static/styles.css`
  - static file serving in `app.py`
- Run full test suite and confirm green.

I’ll start with tests now.

A: Tests failed as expected (TDD red phase), which is good.
Now I’ll implement Phase 3:
- add static files (`index.html`, `app.js`, `styles.css`)
- update `app.py` to serve `/` and `/static/*`
- rerun full tests

Proceeding now.

A: Phase 3 implementation is green.
I’ll now update `code_architecture.md` and `README.md` to reflect the complete web app usage, then I’ll share verification steps and request your approval to finalize Phase 3.

A: ### Phase 3 completed successfully ✅
Your web-based Todo app is now fully functional end-to-end.

### What I added
- **Frontend UI**
  - `static/index.html`
  - `static/styles.css`
  - `static/app.js`
- **Backend static serving**
  - Updated `app.py` to serve:
    - `/` → `index.html`
    - `/static/app.js`
    - `/static/styles.css`
- **Tests first (TDD)**
  - Added `tests/test_frontend_serving.py` for root/static routes.
  - All existing API + store tests still pass.

### Test status
**18/18 tests passing**

---

### How you can verify locally
```bash
cd web_todo_app
python -m unittest discover -s tests -v
python app.py
```
Open: `http://127.0.0.1:8000`

Try:
- add todo
- toggle completion
- delete todo
- refresh page (data persists)

---

Do I have your permission to:
1. mark Phase 3 checklist items complete, and
2. commit the Phase 3 changes?

User: approved

A: Applying finalization now: I will check off Phase 3 items and create the Phase 3 commit.

A: Done — Phase 3 is finalized and committed. ✅

### Final result
Your web-based Todo app is complete with:
- Python backend (`http.server`, no frameworks)
- JSON persistence
- Browser UI (add/list/toggle/delete)
- Full automated test coverage for store + API + frontend serving

### Git history
- `f008957` — Phase 1: file-backed TodoStore + tests
- `0557d14` — Phase 2: HTTP JSON API + tests
- `420df4f` — Phase 3: frontend UI + static serving

If you want, next I can do a quick cleanup pass (e.g., `.gitignore` for `__pycache__`, remove generated artifacts from git) as a small follow-up commit.
````

However, I didn't examine everything with a fine-tooth comb, so I can't vouch for the actual code quality. The app certainly works, though. If you're curious, I pushed the code up to GitHub.

In future posts, we'll explore how to take our coding agent even further. But in the meantime, go wild playing around with this and see if you can build a coding agent that makes you happy.